Run AI on your production data.

With full control.

Give your AI agents the isolation, transactional guarantees, and rollback they need to build, validate, and ship data pipelines on production data.

AI agents can iterate on code, but not on your data

Code is local and reversible. Data pipelines are not.

Pipelines mutate shared state, and failures leave production inconsistent. Traditional data platforms assume slow, manual change.

Bauplan is the execution layer built for fast, AI-generated iteration in production.

The missing execution layer that lets AI work safely on production data

Work with data the same way you work with code

Everything in Bauplan is code, versioned in your repository and executed from your IDE. AI-generated changes run exactly as written, with no hidden state or manual steps.

Bring your AI coding assistant: we provide the safe execution layer.

Git-style safety for AI agents on production data.

Let AI agents work directly on production data without risk. Runs are isolated, publishes are atomic, and failed or bad changes can be rolled back immediately. Tests and expectations can gate publication before anything reaches your production tables.
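To make the idea concrete, here is a minimal sketch of gated, atomic publication in plain Python. It is a toy in-memory model, not the Bauplan API: `run_gated`, `Catalog`, and the example functions are hypothetical names introduced only for illustration. The change runs against an isolated copy, and the copy replaces production only if every expectation passes.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
Catalog = Dict[str, List[Row]]  # hypothetical: table name -> rows


def run_gated(
    production: Catalog,
    change: Callable[[Catalog], None],
    expectations: List[Callable[[Catalog], bool]],
) -> Catalog:
    """Run `change` on an isolated copy; return the copy only if all checks pass."""
    # Isolation: the change only ever sees a copy of production.
    branch = {name: [dict(row) for row in rows] for name, rows in production.items()}
    try:
        change(branch)
    except Exception:
        return production  # failed run: production is untouched
    if all(check(branch) for check in expectations):
        return branch  # publish: the caller swaps to the new state in one step
    return production  # a check failed: nothing is published


def add_null_amount(catalog: Catalog) -> None:
    catalog["orders"].append({"id": 2, "amount": None})


def add_valid_order(catalog: Catalog) -> None:
    catalog["orders"].append({"id": 2, "amount": 5.0})


def no_null_amounts(catalog: Catalog) -> bool:
    return all(row["amount"] is not None for row in catalog["orders"])


production: Catalog = {"orders": [{"id": 1, "amount": 10.0}]}
rejected = run_gated(production, add_null_amount, [no_null_amounts])
published = run_gated(production, add_valid_order, [no_null_amounts])
```

In this sketch, `rejected` is the original production state because the expectation failed, while `published` is a new state containing both orders. Rolling back amounts to keeping a reference to the previous state.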

Use cases

From assessing the feasibility of a request to building and maintaining production pipelines at scale.

Building data pipelines

Agents and engineers build data pipelines like software: write transformations in code, run them in isolation against real data, and publish only validated results.

Safe table ingestion

Ingest new data into an isolated branch, validate it with quality checks, and publish atomically only when it passes.

Debug & fix pipelines

AI agents diagnose pipeline failures, replay runs against the exact state that produced them, and propose fixes in isolated branches you merge when ready.

Data exploration and discovery

Let agents run hundreds of profiling queries, inspect schemas, and sample rows across isolated branches to build a complete picture of your data.

Integrations

Read more about Bauplan integrations in our docs.

A whole data platform in your repo

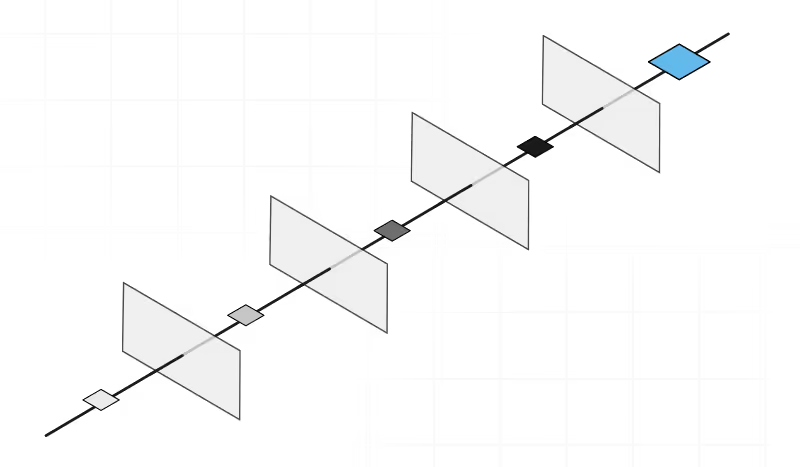

Branch, inspect and merge data like code

Bauplan models the state of your data as branches and commits. Create branches, run changes, inspect history, and merge only when tests pass.
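The branch-and-commit model can be sketched with a small in-memory class. This is an illustrative toy, not Bauplan's implementation or SDK: `DataRepo`, `Commit`, and their methods are hypothetical names, and the merge shown here is fast-forward only.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Commit:
    id: int
    parent: Optional[int]
    tables: Dict[str, tuple]  # table name -> immutable snapshot of rows
    message: str


class DataRepo:
    """Toy model: commits are snapshots, branches are pointers to commits."""

    def __init__(self) -> None:
        self.commits: Dict[int, Commit] = {0: Commit(0, None, {}, "init")}
        self.refs: Dict[str, int] = {"main": 0}

    def create_branch(self, name: str, from_ref: str = "main") -> None:
        # Creating a branch copies a pointer, not the data.
        self.refs[name] = self.refs[from_ref]

    def commit(self, branch: str, table: str, rows: list, message: str) -> int:
        head = self.commits[self.refs[branch]]
        new_id = len(self.commits)
        tables = {**head.tables, table: tuple(rows)}
        self.commits[new_id] = Commit(new_id, head.id, tables, message)
        self.refs[branch] = new_id
        return new_id

    def read(self, ref: str, table: str) -> tuple:
        return self.commits[self.refs[ref]].tables.get(table, ())

    def log(self, branch: str) -> List[str]:
        messages, current = [], self.refs[branch]
        while current is not None:
            commit = self.commits[current]
            messages.append(commit.message)
            current = commit.parent
        return messages

    def merge(self, source: str, into: str = "main") -> None:
        # Fast-forward only: `into` must be an ancestor of `source`.
        target, current = self.refs[into], self.refs[source]
        while current is not None and current != target:
            current = self.commits[current].parent
        if current is None:
            raise ValueError("branches diverged; fast-forward merge not possible")
        self.refs[into] = self.refs[source]


repo = DataRepo()
repo.create_branch("dev")
repo.commit("dev", "orders", [{"id": 1}], "add orders")
main_before_merge = repo.read("main", "orders")  # main has not changed yet
repo.merge("dev")
main_after_merge = repo.read("main", "orders")
```

Until `merge` is called, readers of `main` never see the work done on `dev`, and the merge itself is a single pointer update.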

Native Python execution. No infrastructure to manage.

Pipelines are ordinary Python and SQL functions. Declare environments and quality checks in code. Execution is managed by the platform.
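As a rough illustration of pipelines declared as plain functions with quality checks in code, the sketch below wires functions into a pipeline by matching parameter names to upstream tables. The `model` and `expectation` decorators and the `run` function are hypothetical stand-ins written for this example; they are not the Bauplan SDK.

```python
import inspect
from typing import Callable, Dict, List

MODELS: Dict[str, Callable] = {}        # table name -> function that builds it
CHECKS: Dict[str, List[Callable]] = {}  # table name -> quality checks


def model(fn: Callable) -> Callable:
    """Register a function as the producer of the table named after it."""
    MODELS[fn.__name__] = fn
    return fn


def expectation(table: str) -> Callable:
    """Register a quality check that must pass for `table`."""
    def wrap(fn: Callable) -> Callable:
        CHECKS.setdefault(table, []).append(fn)
        return fn
    return wrap


def run(sources: Dict[str, list]) -> Dict[str, list]:
    """Build every registered model, resolving inputs by parameter name."""
    tables = dict(sources)

    def build(name: str) -> list:
        if name in tables:
            return tables[name]
        fn = MODELS[name]
        inputs = [build(param) for param in inspect.signature(fn).parameters]
        result = fn(*inputs)
        for check in CHECKS.get(name, []):
            if not check(result):
                raise ValueError(f"expectation {check.__name__} failed for {name}")
        tables[name] = result
        return result

    for name in MODELS:
        build(name)
    return tables


@model
def clean_orders(raw_orders):
    return [row for row in raw_orders if row["amount"] is not None]


@model
def revenue(clean_orders):
    return [{"total": sum(row["amount"] for row in clean_orders)}]


@expectation("revenue")
def total_is_non_negative(rows):
    return rows[0]["total"] >= 0


result = run({"raw_orders": [{"amount": 5.0}, {"amount": None}, {"amount": 2.5}]})
```

Because dependencies are read from function signatures, the pipeline definition is just the code itself, with no separate configuration to keep in sync.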

One control loop for humans and agents

A few predictable primitives for developers and AI agents. Every workflow follows the same loop: branch → run → inspect → merge.

FAQs

Great! Bauplan is built to be fully interoperable. All the tables produced with Bauplan are persisted as Iceberg tables in your S3, making them accessible to any engine and catalog that supports Iceberg. Our clients use Bauplan together with Databricks, Snowflake, Trino, AWS Athena, AWS Glue, Kafka, SageMaker, and other tools.

Bauplan consolidates pipeline execution and data versioning into one workflow: branch, run, validate, merge. You can keep S3 and your orchestrator; you remove a lot of cluster complexity and glue. For example, an Airflow DAG that spins up an EMR cluster, submits Spark steps, then runs an AWS Glue crawler to refresh the Glue Data Catalog before triggering downstream jobs becomes: Airflow triggers a Bauplan run on an isolated branch that writes Iceberg tables directly to S3.

Your data stays in your own S3 bucket at all times. Bauplan processes it securely using either Private Link (connecting your S3 to your dedicated single-tenant environment) or entirely within your own VPC using Bring Your Own Cloud (BYOC).

No. Bauplan is just Python (and SQL for queries). That's why your AI assistant can write Bauplan code right away.

Bauplan allows you to use Git abstractions like branches, commits, and merges to work with your data. You can create data branches for your data lake to isolate changes safely and experiment without affecting production, and use commits to time-travel to previous versions of your data, code, and environments in one line of code. All of this comes with transactional consistency and integrity across branches, updates, merges, and queries.