Move Fast and Don’t Break Things: AI-Native Development for Intella Aerospace Pipelines

“We are a startup, Claude Code adopters, and we need to move fast, but the space industry has incredibly high standards for reliability. Bauplan branches gave us the confidence and speed during development, and correctness guarantees in production.”

Results

- Fast and safe development: The full data lifecycle (ingestion, transformation, data quality) was scaffolded by Claude Code in a single session rather than over weeks.

- Correct-by-design lakehouse: Git-for-data ensures that neither humans nor agents can corrupt tables or publish half-written pipelines.

About Intella

Intella is a space technology company whose satellite operations platform uses machine learning to detect anomalies in satellite telemetry.

Satellite operators rely on Mercury, Intella's platform, to monitor their fleets and ensure that communications and data services remain reliable and available.

Satellites produce a large stream of sensitive telemetry data, which requires robust, scalable infrastructure and precise consistency guarantees across the full data lifecycle: ingestion, data pipelines, and predictions.

Pain points at a glance

- AI-assisted development without guardrails: AI coding agents can scaffold pipelines fast, but without isolation and domain knowledge, they risk hallucinating logic or corrupting production tables.

- Speed vs. safety: Intella needs to ship custom pipelines fast, but the space industry has zero tolerance for bad data.

- Bad data reaches downstream models: Without quality controls embedded in the pipeline, invalid values and duplicates slip through unnoticed, and bad data means bad predictions.

The Challenge: move fast and meet space-grade reliability

As Intella onboards new clients, they need to ship custom ingestion logic and modeling improvements fast, without ever risking the production assets that partners rely on. In the space industry, reliability isn’t negotiable, so “move fast” can’t mean “break things.”

When pipelines move from development to production, the proverbial “garbage in, garbage out” failure mode of ML is not acceptable: without strict data quality controls, invalid values can slip into the pipeline unnoticed. Bad data leads to bad models, which ultimately produce bad predictions that can erode trust in the platform.

The Solution

Intella adopted Bauplan to bring git-like isolation to their data infrastructure. The combination of a “correct-by-design” lakehouse and Claude Code created a workflow where pipelines could be built rapidly without risk to production, and shipped with built-in quality checks: move fast and don’t break things.

Confidence and speed during development

Data engineers are used to a standard trade-off: change things slowly and safely, or quickly but dangerously. Intella engineers were among the first to try out our Skills on a real-world codebase. Built for a Claude Code-style development setup, Skills are simple markdown files that encode domain knowledge about lakehouse workflows, so that LLMs can scaffold production-grade pipelines that follow platform-specific best practices instead of hallucinating.

What made this practical in a real codebase was Bauplan’s branch-based isolation: engineers could iterate with AI on ingestion logic and transformations on a clone of the lakehouse, validating outputs end-to-end without putting production at risk.
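To make the idea of a lakehouse clone concrete, here is a minimal toy model of branch-based isolation. It is a hypothetical in-memory stand-in written for illustration, not Bauplan's API: the class name `ToyLakehouse`, its methods, and the table and branch names are all assumptions. Creating a branch copies only the table-to-snapshot references, so work on the branch never changes what `main` serves until the branch is merged.

```python
from dataclasses import dataclass, field


@dataclass
class ToyLakehouse:
    """Hypothetical in-memory stand-in for a branch-aware data catalog.

    Each branch maps table names to immutable snapshots (tuples of rows).
    """

    branches: dict = field(default_factory=lambda: {"main": {}})

    def create_branch(self, name: str, from_ref: str = "main") -> None:
        if name in self.branches:
            raise ValueError(f"branch {name!r} already exists")
        # Copy only the name -> snapshot references, never the rows themselves.
        self.branches[name] = dict(self.branches[from_ref])

    def write(self, branch: str, table: str, rows) -> None:
        # A write replaces the table's snapshot on this branch only.
        self.branches[branch][table] = tuple(rows)

    def read(self, branch: str, table: str) -> tuple:
        return self.branches[branch].get(table, ())

    def merge(self, source: str, into: str = "main") -> None:
        # Toy merge with no conflict detection: the source branch's tables
        # overwrite the target's, then the source branch is deleted.
        self.branches[into] = {**self.branches[into], **self.branches[source]}
        del self.branches[source]


# Usage: iterate on a branch while main keeps serving the old data.
lake = ToyLakehouse()
lake.write("main", "telemetry_bronze", [{"satellite_id": "SAT-1", "value": 21.5}])
lake.create_branch("feature_ingestion")
lake.write(
    "feature_ingestion",
    "telemetry_bronze",
    lake.read("main", "telemetry_bronze") + ({"satellite_id": "SAT-2", "value": 19.0},),
)
assert len(lake.read("main", "telemetry_bronze")) == 1  # main is untouched
lake.merge("feature_ingestion")
assert len(lake.read("main", "telemetry_bronze")) == 2  # changes published
```

A real lakehouse would also detect conflicts when `main` has moved since the branch was created; the toy merge simply overwrites, which keeps the isolation property visible without the extra machinery.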

The first AI-assisted pipeline followed a medallion architecture: raw telemetry lands in bronze tables, then a transformation cleans, deduplicates, and validates the data into silver tables ready for training. Because data quality is “just code”, Claude Code can encode quality standards directly in the pipeline as plain Python functions.
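As an illustration of a bronze-to-silver step expressed as a plain Python function, here is a minimal sketch. It is not Intella's pipeline or Bauplan's model API (any platform-specific decorators are omitted), and the telemetry schema (`satellite_id`, `sensor`, `timestamp`, `value`) is a hypothetical assumption.

```python
import math

# Hypothetical telemetry schema: one reading per (satellite, sensor, timestamp).
KEY = ("satellite_id", "sensor", "timestamp")


def bronze_to_silver(bronze_rows):
    """Clean and deduplicate raw telemetry rows into silver rows (sketch)."""
    seen = set()
    silver = []
    for row in bronze_rows:
        value = row.get("value")
        # Clean: drop readings that are missing, non-numeric, or NaN/inf.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            continue
        if not math.isfinite(value):
            continue
        # Deduplicate: keep only the first valid reading for each key.
        key = tuple(row[k] for k in KEY)
        if key in seen:
            continue
        seen.add(key)
        # Normalize the value to float for downstream training code.
        silver.append({**row, "value": float(value)})
    return silver


bronze = [
    {"satellite_id": "SAT-1", "sensor": "battery_temp", "timestamp": 1, "value": 21.5},
    {"satellite_id": "SAT-1", "sensor": "battery_temp", "timestamp": 1, "value": 21.5},  # duplicate
    {"satellite_id": "SAT-1", "sensor": "battery_temp", "timestamp": 2, "value": float("nan")},
    {"satellite_id": "SAT-1", "sensor": "battery_temp", "timestamp": 3, "value": None},
    {"satellite_id": "SAT-2", "sensor": "battery_temp", "timestamp": 1, "value": 19},
]
silver = bronze_to_silver(bronze)  # two rows survive: SAT-1 @ t=1 and SAT-2 @ t=1
```

Keeping the transformation a pure function of its input rows makes it easy to rerun on a branch and to test in isolation, which is what lets an AI assistant iterate on it safely.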

For Intella, the pipeline that Claude Code built reduced duplicate rows and enforced valid numeric ranges on every column, with all checks verified automatically before a single row could reach production.
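The checks that gate a silver table can themselves be small Python functions that fail the run when violated. The sketch below assumes the same hypothetical schema as a bronze-to-silver step keyed on `satellite_id`, `sensor`, and `timestamp`; the sensor names and `VALID_RANGES` limits are invented placeholders, not Intella's real thresholds, and `DataQualityError` is a hypothetical exception name.

```python
# Hypothetical valid ranges per sensor; real limits depend on the spacecraft.
VALID_RANGES = {
    "battery_temp": (-40.0, 85.0),
    "bus_voltage": (0.0, 36.0),
}


class DataQualityError(Exception):
    """Raised when a table fails a quality check, which aborts the run."""


def expect_unique(rows, key):
    # True if no two rows share the same key tuple.
    keys = [tuple(r[k] for k in key) for r in rows]
    return len(keys) == len(set(keys))


def expect_in_range(rows, ranges):
    # True if every value lies inside its sensor's declared range.
    for r in rows:
        bounds = ranges.get(r["sensor"])
        if bounds is None:
            return False  # sensor without a declared range: fail closed
        lo, hi = bounds
        if not lo <= r["value"] <= hi:
            return False
    return True


def check_silver(rows):
    """Run all checks; raise instead of returning partially valid data."""
    failures = []
    if not expect_unique(rows, ("satellite_id", "sensor", "timestamp")):
        failures.append("duplicate keys")
    if not expect_in_range(rows, VALID_RANGES):
        failures.append("values out of range")
    if failures:
        raise DataQualityError(", ".join(failures))
    return rows
```

Raising, rather than logging a warning, is the design choice that matters: a failed check stops the run, so invalid rows never get a chance to be published.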

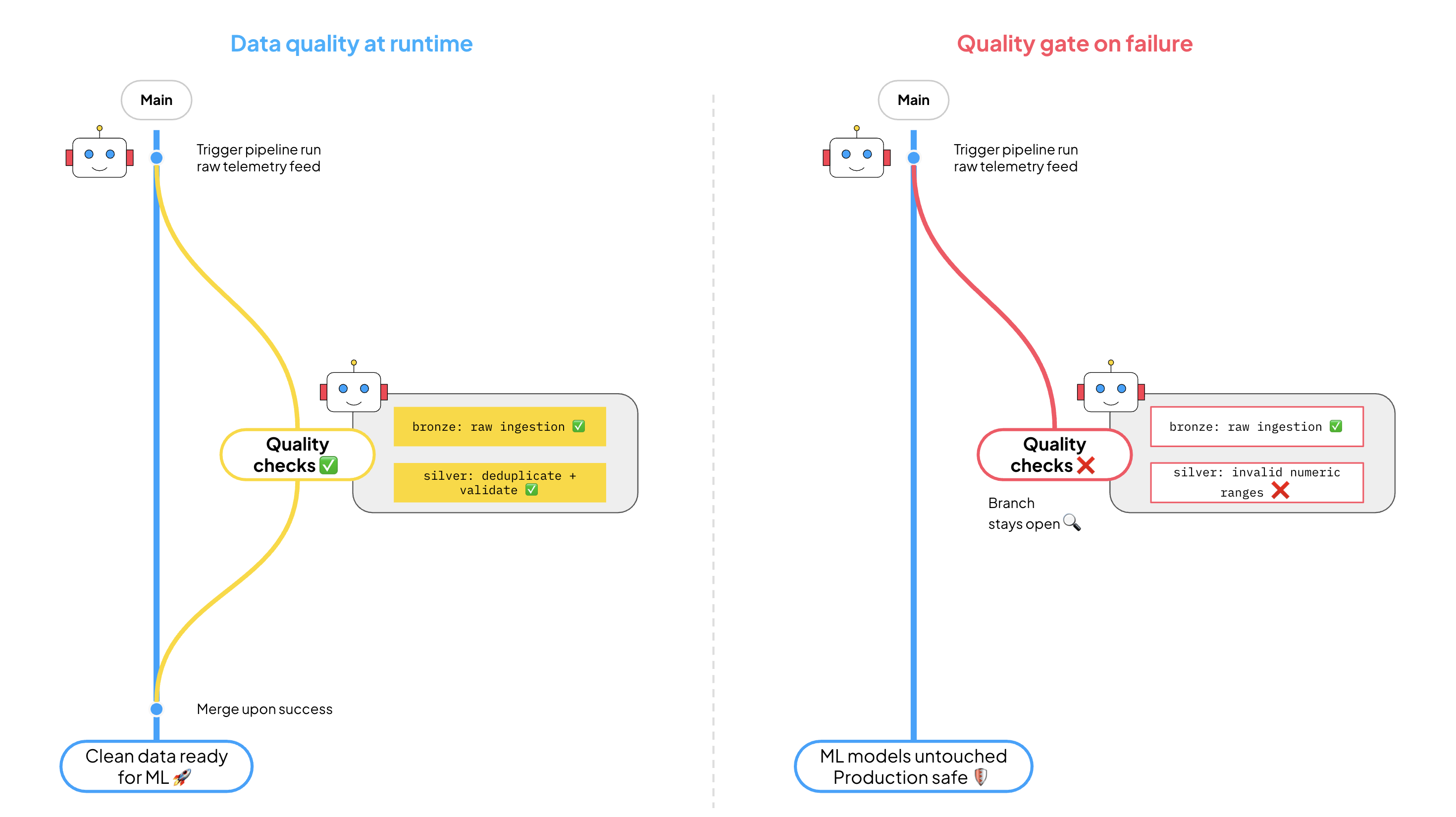

Correctness guarantees in production

Every Bauplan run (both at ingestion and at transformation) executes automatically on a transactional branch, i.e. an ephemeral data branch that holds all of the run's changes in isolation. If data quality checks fail, user code has bugs, or data contracts are violated, no change from the pipeline reaches downstream systems, which keep reading from the previous, untouched snapshot of the data. Conversely, if a run succeeds, all of its changes become available to other systems atomically, and the platform cleans up the ephemeral branch automatically.
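The all-or-nothing semantics can be sketched with a toy catalog whose readers always see a single `head` snapshot. This is a hypothetical model for intuition, not how Bauplan implements transactional branches: `ToyCatalog` and its `run` method are assumed names, and the deep copy stands in for the platform's ephemeral branch (a real lakehouse would avoid copying data).

```python
import copy


class ToyCatalog:
    """Hypothetical catalog: readers only ever see the `head` snapshot."""

    def __init__(self, tables):
        self.head = tables

    def run(self, steps):
        # Ephemeral working copy: invisible to readers of `head`.
        working = copy.deepcopy(self.head)
        for step in steps:
            # If any step raises (bug, failed check, violated contract),
            # the exception propagates and `head` is never reassigned,
            # as if the run never happened.
            step(working)
        # Every step succeeded: publish all changes with a single assignment.
        self.head = working
        return self.head


def append_row(tables):
    tables["telemetry_silver"].append(3)


def failing_check(tables):
    raise ValueError("quality check failed")


catalog = ToyCatalog({"telemetry_silver": [1, 2]})
try:
    catalog.run([append_row, failing_check])
except ValueError:
    pass
assert catalog.head == {"telemetry_silver": [1, 2]}  # nothing was published
catalog.run([append_row])
assert catalog.head == {"telemetry_silver": [1, 2, 3]}  # published atomically
```

Note that `append_row` did modify the working copy in the failed run, yet readers never saw it: discarding the working copy is what makes a partially executed pipeline harmless.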

In other words, Bauplan guarantees that either all tables produced by a run are up to standard, or no change will be published, as if that run never happened. For Intella, this meant the team could onboard a new space agency’s telemetry data and keep improving pipelines while ensuring production assets stayed correct and trustworthy.

Try It Yourself

Inspired by the Intella pipeline, we have published an LLM-first version of the use case in a narrative format: instead of manually building the pieces of the stack, you progress by chatting with Claude using a set of predefined prompts. Try it here!